A mass media project with AWS and CodePipeline, a fintech that uses Azure and Jenkins, and a retail company with GCP and CircleCI. All of these projects (times 100) run at Wizeline simultaneously, and with so much variety comes a challenge: How can we ensure DevOps-centered services for all our clients?

We have come up with a set of best practices to guarantee a cohesive quality outcome, regardless of each project’s differences: A nine-step guide on ensuring DevOps practices in a project or a product team with minimal overhead.

TL;DR: Create a Value Stream Map, understand your high-level architecture, use a public cloud provider, use containers, set up your cloud environments and infrastructure as code, create the CI/CD and the continuous testing automation, set up good monitoring, document and share the knowledge, and make a plan for your team.

1. Create a Value Stream Map

First, make sure to have a current map of all your activities; this is called a process map. Then, take it further and convert it into a tool taken directly from Lean Manufacturing: a Value Stream Map.

The Value Stream Map focuses on the principle that “to improve something, first you must measure it.”

Therefore, you must understand what “value” in your project is. Value is any quality of your work—a service or product—for which the customer is willing to pay. When a step of your process transforms its input and takes it towards the final output, it generates value. These are the steps to create a Value Stream Map:

- Map all the activities, from feature requests to code in production.

- Add current times and expected times for each of the activities.

- Add the owner or approver of each activity.

- Classify each activity as plan, code, build, test, release, deploy, operate, or monitor.

After completing these steps, you can eliminate, reduce, or automate the activities that do not contribute value.
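The steps above can be sketched in a few lines of Python. This is only an illustration, not a prescribed tool: the `Activity` fields, the `adds_value` flag, the sample activities, and the hours are all hypothetical. It records each activity with its phase, owner, and times, then reports how much of the lead time adds value and which activities to look at first.

```python
from dataclasses import dataclass

PHASES = {"plan", "code", "build", "test", "release", "deploy", "operate", "monitor"}


@dataclass
class Activity:
    name: str
    phase: str             # one of PHASES
    owner: str             # responsible person or approver
    current_hours: float   # how long the activity takes today
    expected_hours: float  # how long it should take
    adds_value: bool       # would the customer pay for this step?

    def __post_init__(self) -> None:
        if self.phase not in PHASES:
            raise ValueError(f"unknown phase: {self.phase}")


def flow_efficiency(activities: list[Activity]) -> float:
    """Share of the total current time spent on value-adding activities."""
    total = sum(a.current_hours for a in activities)
    value = sum(a.current_hours for a in activities if a.adds_value)
    return value / total if total else 0.0


def improvement_candidates(activities: list[Activity]) -> list[Activity]:
    """Activities that add no value or run over time, largest excess first."""
    flagged = [
        a for a in activities
        if not a.adds_value or a.current_hours > a.expected_hours
    ]
    return sorted(
        flagged,
        key=lambda a: a.current_hours - a.expected_hours,
        reverse=True,
    )


# Hypothetical stream, from feature request to code in production.
stream = [
    Activity("Write feature", "code", "dev team", 16, 16, True),
    Activity("Wait for manual approval", "release", "release manager", 24, 2, False),
    Activity("Run test suite", "test", "QA", 4, 1, True),
    Activity("Deploy to production", "deploy", "ops", 4, 1, True),
]

print(f"flow efficiency: {flow_efficiency(stream):.0%}")
for activity in improvement_candidates(stream):
    print(f"- {activity.name} ({activity.owner})")
```

In this made-up stream, the manual approval is the first candidate to eliminate, reduce, or automate.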

The following diagram is an example of a simple process map:

Fig 1: Process Map Example

Fig 1: Process Map Example

2. Understand your High-Level Architecture

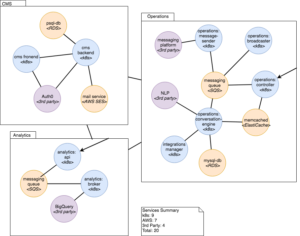

Create a Service Map to understand how your internal services communicate with other services.

The following diagram is an example of a simple Service Map:

Fig 2: Service Map Example

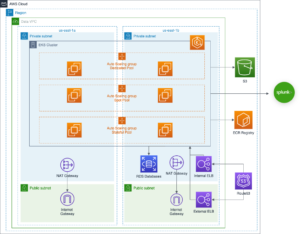

After creating your Service Map, create an Infrastructure Architecture Diagram of the entire solution to understand your limits, technologies, and possible bottlenecks.

The next figure is an example of an Infrastructure Architecture Diagram:

Fig 3: Infrastructure Architecture Diagram Example

Pro Tip: The c4model is excellent for creating these diagrams. Start simple and then add more detail.

3. Use your Preferred Cloud Provider

The best approach to creating modern applications is to use a public cloud. Its managed services can scale and provide a reliable infrastructure, which lets you focus on your code without worrying about managing infrastructure at a deep level.

4. Select your Cloud Runtime: Containers

Container technologies are great tools to achieve integrated and replicable environments that are convenient and secure for deployment. To set up the local and test container environments:

- Start by dockerizing your service for your local development environment.

- Create a Docker Compose manifest to run all services locally. This step has excellent benefits: you can easily run databases or other services without installing applications on your machine, and you avoid conflicting versions. Every application runs isolated in its own container.

- Dockerize the test execution and the CI environment to enhance consistency and reproducibility.

There are other runtimes, but containers offer the best balance between benefits and flexibility.

Pro Tip: Be sure to follow the Twelve-Factor App Methodology for your application.
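One Twelve-Factor principle that pays off quickly with containers is keeping configuration in the environment, so the same image runs unchanged in every environment. The following is a minimal Python sketch under assumptions: the variable names `DATABASE_URL`, `PORT`, and `DEBUG` and the `Settings` class are hypothetical, chosen only for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Settings:
    database_url: str
    port: int
    debug: bool


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Read all configuration from environment variables.

    Passing a mapping instead of using os.environ makes this easy to test.
    """
    env = os.environ if env is None else env
    return Settings(
        database_url=env["DATABASE_URL"],   # required: fail fast if missing
        port=int(env.get("PORT", "8080")),  # optional, with a default
        debug=env.get("DEBUG", "false").lower() in {"1", "true", "yes"},
    )
```

With Docker Compose, these values would typically come from the `environment` section of each service, so nothing environment-specific is baked into the image.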

5. Set up the Cloud Environments and Infrastructure as Code

Follow the next steps to set up your cloud environments and infrastructure as code:

- Create a repository or a directory within your service repository for your infrastructure as code (IaC) scripts. I recommend you separate the platform side and the application-specific side. The platform code is the infrastructure that some or all of your services share. It can be a Kubernetes cluster, a large RDS Database, or a centralized configuration service.

- Automate the IaC provisioning. Its security benefit: no credentials are shared or created because it runs on an automated build system. Its productivity benefit: you can trigger the job from a website without setting up a local environment. Some of my favorite tools for these jobs are Atlantis, Terraform Cloud, and GitHub Actions.

- Create the Terraform module for the environment. As with any code, we want to reduce duplication and increase cohesion and modularization. By creating the environment module, we can easily replicate it for our development, test, and production environments.

- Instantiate each of the required environments. Now we can use the previously created module to create our cloud environments.
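To illustrate the last two steps, here is a hedged Python sketch that instantiates one environment module three times. Terraform modules are normally written in HCL; this sketch instead emits Terraform's JSON configuration syntax, which Terraform also reads from files ending in `.tf.json`. The module path `./modules/environment` and the input variables `environment` and `instance_count` are hypothetical and would need to match your own module.

```python
import json

# Hypothetical per-environment inputs; only the values differ between environments.
ENVIRONMENTS = {
    "development": {"instance_count": 1},
    "test": {"instance_count": 1},
    "production": {"instance_count": 3},
}


def render_environments(module_source: str, environments: dict) -> dict:
    """Build one Terraform module block per environment from the same module."""
    return {
        "module": {
            name: {"source": module_source, "environment": name, **variables}
            for name, variables in environments.items()
        }
    }


if __name__ == "__main__":
    config = render_environments("./modules/environment", ENVIRONMENTS)
    # Redirect this output to a file such as main.tf.json.
    print(json.dumps(config, indent=2))
```

The point of the sketch is the shape, not the tooling: the environment is defined once, and each instance only supplies the values that differ.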

6. Create the CI/CD

Continuous Integration and Continuous Delivery/Deployment are at the core of the DevOps practices, connecting many software development life cycle activities. These are incremental steps you can take:

- Create the CI jobs for each of the services. CI makes sure we do not introduce bad code or failing test cases to our main branch.

- Create the CD pipeline for each of the services. The main job of a CD pipeline is to automate the application deployment process, but it does not stop there: proper tests and multiple environments are essential for a healthy CD.

- Add meaningful and actionable notifications to the CI/CD.

- Add and extend tests to the CI/CD pipeline to increase the confidence of every change.
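As a rough illustration of the steps above, the following Python sketch runs pipeline stages in order, stops at the first failure, and builds a notification that says what failed and how to reproduce it. It is tool-agnostic and hypothetical: `StageResult`, `run_stages`, and `format_notification` are names invented for this example, and a real setup would use the stages and notification channels of your CI/CD tool.

```python
import subprocess
from dataclasses import dataclass


@dataclass
class StageResult:
    name: str
    command: list[str]
    returncode: int
    output: str

    @property
    def ok(self) -> bool:
        return self.returncode == 0


def run_stages(stages: list[tuple[str, list[str]]]) -> list[StageResult]:
    """Run (name, command) stages in order and stop at the first failure."""
    results = []
    for name, command in stages:
        proc = subprocess.run(command, capture_output=True, text=True)
        results.append(
            StageResult(name, command, proc.returncode, proc.stdout + proc.stderr)
        )
        if proc.returncode != 0:
            break
    return results


def format_notification(service: str, results: list[StageResult]) -> str:
    """Build a message that names the failing stage and how to reproduce it."""
    failed = next((r for r in results if not r.ok), None)
    if failed is None:
        return f"[{service}] pipeline passed, stages run: {len(results)}"
    tail = "\n".join(failed.output.strip().splitlines()[-5:])
    return (
        f"[{service}] stage '{failed.name}' failed (exit code {failed.returncode})\n"
        f"command: {' '.join(failed.command)}\n"
        f"last output:\n{tail}"
    )
```

A notification built this way is actionable because it names the stage, the exact command, and the last lines of output, instead of only saying that the build is red.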

7. Implement Continuous Testing

To implement continuous testing in your process:

- Dockerize the execution of the UI, integration, and sanity tests. We get the same benefits as when dockerizing our application: developers can run tests in different environments, manually or through automation, without worrying about preparing the environment and dependencies.

- Parameterize the test execution. Tests should be treated as applications and follow similar principles. By parameterizing our tests, we can run them against different environments and configurations.

- Add test data and reports to a data store like Amazon S3. By using the parameterization in the previous step and the decoupled data, we can use different data sets. Store the reports to generate metrics like coverage, number of tests, and test execution time.

- Create a job to execute the tests on-demand for any environment and integrate it with existing CI/CD.
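A minimal Python sketch of the parameterization and reporting ideas above, under assumptions: the environment names, the `example.com` URLs, the `--dataset` option, and the result fields `passed` and `seconds` are all hypothetical. The same test entry point takes the target environment and data set as parameters, and a small summary function produces the kind of metrics you might store alongside the reports.

```python
import argparse
from dataclasses import dataclass

# Hypothetical environments and URLs, for illustration only.
BASE_URLS = {
    "development": "https://dev.example.com",
    "test": "https://test.example.com",
    "production": "https://www.example.com",
}


@dataclass
class RunConfig:
    environment: str
    base_url: str
    dataset: str


def parse_args(argv=None) -> RunConfig:
    """Turn command-line parameters into the configuration for one test run."""
    parser = argparse.ArgumentParser(
        description="Run the test suite against an environment"
    )
    parser.add_argument(
        "--environment", choices=sorted(BASE_URLS), default="development"
    )
    parser.add_argument("--dataset", default="smoke")
    args = parser.parse_args(argv)
    return RunConfig(args.environment, BASE_URLS[args.environment], args.dataset)


def summarize(results: list[dict]) -> dict:
    """Report metrics from per-test results: count, failures, total duration."""
    return {
        "tests": len(results),
        "failed": sum(1 for r in results if not r["passed"]),
        "duration_seconds": round(sum(r["seconds"] for r in results), 3),
    }
```

Because the target is just a parameter, the on-demand job and the CI/CD pipeline can call the same entry point with different arguments.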

8. Monitor

Monitoring is at the base of the site reliability pyramid of needs. Whenever the application fails or has issues, the development team must debug and troubleshoot it themselves. Proper monitoring makes that possible.

- Add log aggregation to all the services in the environments.

- Add some other kind of monitoring—tracing, metrics, security scanners, APM—as needed.
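Log aggregation is easier when every service writes structured logs to standard output and leaves collection to the platform. The following is a sketch using only Python's standard `logging` module; the `JsonFormatter` class, the field names, and the service name `checkout` are assumptions made for this example, not a required schema.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Format each log record as one JSON object per line."""

    def __init__(self, service: str) -> None:
        super().__init__()
        self.service = service

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": self.formatTime(record),
            "level": record.levelname,
            "service": self.service,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry)


def configure_logging(service: str, level: int = logging.INFO) -> logging.Logger:
    """Send JSON logs to stdout so the platform's collector can pick them up."""
    handler = logging.StreamHandler(sys.stdout)
    handler.setFormatter(JsonFormatter(service))
    logger = logging.getLogger(service)
    logger.handlers = [handler]
    logger.setLevel(level)
    logger.propagate = False
    return logger


log = configure_logging("checkout")  # hypothetical service name
log.info("order %s accepted", "A-1001")
```

Including the service name in every entry is what lets you filter one service out of the aggregated stream later.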

9. Share the Knowledge

A system that is not visible to everybody is not helpful. Proper documentation and knowledge sharing are as important as the tests or the CI/CD.

When completing your system, ensure that everybody in your team:

- Knows how to deploy (using the automated setup).

- Knows how to run and debug the CI (using the automated setup).

- Knows how to run the integration and UI tests (using the automated setup).

- Knows how to access the logs and monitoring systems.

- Documents and communicates the Value Stream Map, architecture, and infrastructure to all project members, along with new decisions.

- Creates playbooks on how to troubleshoot production issues and runbooks for everyday operations tasks.

- Documents and shares the lessons learned during the process.

Recommendations

Follow these recommendations for a successful implementation:

- Keep a standard interface whenever possible. For example, use Makefiles so that every build task is called the same way, such as make build.

- Keep your tasks decoupled from the CI tool; this makes them easier to develop, test, debug, and run locally.

- Use managed services to minimize maintenance costs, especially on projects that do not expect a dedicated SRE or Operations Engineer.

- Start simple. Run one test case, deploy to one environment, draw some circles and lines on a whiteboard, iterate, and incrementally improve from there.

- It is OK to create the first infrastructure iterations manually using the console, since it makes exploration faster. After that, you can code your findings.

- Git is your friend. Version control all your code, infrastructure, configuration, and documentation.

Now that you know all this information, make an implementation plan for your team. It does not need to be complex or made with fancy tools. A simple list in a spreadsheet is sufficient. Identify the tasks still needed to cover the steps mentioned in this guide. For proper accountability, assign an effort and an owner to each activity and estimate completion dates.
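If you prefer to keep the plan next to the code, the same simple list can live in a CSV file that any spreadsheet opens. This Python sketch is only one possible shape: the column names, tasks, owners, efforts, and dates are all hypothetical placeholders.

```python
import csv
import io

FIELDS = ["step", "task", "owner", "effort_days", "target_date", "done"]

# Hypothetical plan entries, one row per missing task.
PLAN = [
    {"step": "1. Value Stream Map", "task": "Map activities with the team",
     "owner": "Ana", "effort_days": 2, "target_date": "2030-01-15", "done": True},
    {"step": "6. CI/CD", "task": "Create CI job for the API service",
     "owner": "Luis", "effort_days": 3, "target_date": "2030-01-22", "done": False},
    {"step": "8. Monitor", "task": "Add log aggregation",
     "owner": "Ana", "effort_days": 5, "target_date": "2030-02-05", "done": False},
]


def to_csv(rows: list[dict]) -> str:
    """Render the plan as CSV text that a spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def remaining_effort(rows: list[dict]) -> int:
    """Total effort, in days, of the tasks that are not done yet."""
    return sum(row["effort_days"] for row in rows if not row["done"])


print(to_csv(PLAN))
print(f"remaining effort: {remaining_effort(PLAN)} days")
```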

There is an overwhelming number of DevOps technologies and articles out there. Don’t let them distract you; no tool will do these steps for you. Just keep in mind: focus on value and reduce waste. The rest will follow!

If you have any thoughts related to your experience, I would love to hear about them in the comments below.
