Microservices the old-fashioned way

To talk about microservices, we first need to go back and understand why they became necessary. Back in the day, development and production teams deployed monolithic applications as a single unit on bare-metal servers. On the same machine we installed the dependencies, the database, the cache server, and everything else the application needed.

Deploying applications in just one place was common until it became necessary to have highly scalable, flexible, and reliable solutions.

Such solutions let businesses serve thousands or millions of users simultaneously.

Breaking the ice

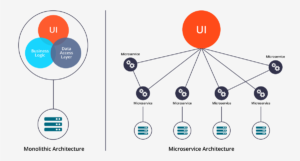

Figure 1. Side by side of a monolithic and microservices architecture

As shown in Figure 1, the classic monolithic application gathers the UI, the business logic, and the data layer on the same server. Nowadays, this approach is recommended for small applications or proofs of concept. Modern production applications handle so much data that, with everything on one server, a single error could lead to a significant business loss.

On the other hand, microservices break the ice of the monolith and divide it into small pieces. Some of those pieces handle the business logic while others access the data layer, which creates a scalable, flexible, and reliable solution.

What do we get from this approach?

One of the objectives of implementing microservices is to split the workload that would otherwise be assigned to one server. With a monolithic architecture, even a small application may need larger instances to handle the workload, such as t3.large or t3.xlarge, depending on the application's needs. Paying for these kinds of instances over long periods can significantly affect the business's bottom line.

Dividing our applications’ workload across small instances that focus on specific tasks allows us to use cheaper instances with less load. These can be scheduled for a short time or run 24/7, and the money saved can go to other initiatives that help the team do a better job.
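To make the cost argument concrete, here is a minimal sketch of the arithmetic. The hourly rates are hypothetical placeholders chosen for illustration, not real AWS prices, and the split into one always-on service plus one short daily task is an assumption.

```python
def monthly_cost(hourly_rate: float, hours_per_day: float, days: int = 30) -> float:
    """Cost of one instance that runs `hours_per_day` hours on each of `days` days."""
    return hourly_rate * hours_per_day * days


# Hypothetical hourly rates (NOT real AWS prices).
LARGE_RATE = 0.10  # one big instance hosting the whole monolith
SMALL_RATE = 0.02  # one small instance focused on a single task

# Monolith: one large instance running 24/7.
always_on_large = monthly_cost(LARGE_RATE, 24)

# Split workload: a small always-on API plus a small task that runs 1 hour a day.
api_small = monthly_cost(SMALL_RATE, 24)
report_small = monthly_cost(SMALL_RATE, 1)
split_total = api_small + report_small

print(f"monolith: {always_on_large:.2f}  split: {split_total:.2f}")
```

With real workloads the result depends entirely on the actual prices and on how many small instances the split requires, so the numbers should be replaced with your own before drawing conclusions.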

One of the main concepts related to microservices is serverless computing, and the AWS service for it is Lambda. A runtime environment that is available on demand and can handle thousands of requests per second is a powerful development tool because it gives more flexibility than an EC2 instance for just a fraction of the price. We can scale it up to improve the reliability of our solutions. Though it sounds great, there are some limits to account for: disk space for dependencies is limited, and a task can time out before it finishes (a single Lambda invocation can run for at most 15 minutes). We need to assess our needs to choose the best solution, as Lambda won’t always be the best fit because we are tied to a specific runtime environment. What if we want a full-stack environment for running complex or long tasks like image processing or report generation?
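The timeout concern can be illustrated with a small Lambda handler sketch in Python. The `handler(event, context)` signature and `context.get_remaining_time_in_millis()` come from the Lambda Python runtime; the event shape (`items`), the `process` function, and the safety margin are hypothetical and only serve the example.

```python
import json

# Hypothetical safety margin: stop this many milliseconds before the timeout.
SAFETY_MARGIN_MS = 5_000


def process(item):
    """Placeholder for the real per-item work (hypothetical)."""
    return item * 2


def handler(event, context):
    """Lambda entry point: process items until the time budget is nearly used up."""
    items = event.get("items", [])
    done = []
    for item in items:
        # The Lambda context object reports how much time is left before timeout.
        if context.get_remaining_time_in_millis() < SAFETY_MARGIN_MS:
            break
        done.append(process(item))
    # Report what was finished and what is left for a later invocation.
    return {
        "statusCode": 200,
        "body": json.dumps({"processed": done, "remaining": items[len(done):]}),
    }
```

A handler like this can return partial results instead of being killed mid-task, but work that routinely exceeds the limit is a sign that a container-based option may fit better.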

How can AWS help?

AWS keeps adding tools that address the real needs of building and deploying modern applications, with enough computing power and capabilities for demanding tasks.

One of those tools is Amazon Elastic Container Service (ECS). With the Fargate launch type, you package your application as a Docker image. That way, you have full control of your stack and can add the dependencies you need to create more complex and specialized tasks that are not restricted to a fixed timeout.

With Fargate, you pay for what you use. You configure your services with the amount of virtual CPUs (vCPUs) and memory that your app needs. If you need a heavy task executed every day for just 1 hour, you can invoke that task, and all the resources are cleaned up after the task is done.
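Invoking a task on demand can be sketched with the ECS `run_task` API. The sketch below assumes the `boto3` package is installed, AWS credentials are configured, and a task definition (where vCPUs and memory are set) is already registered. The cluster name, task definition name, subnet, and security group IDs are hypothetical placeholders.

```python
def build_run_task_request(cluster, task_definition, subnets, security_groups):
    """Build the keyword arguments for a one-off Fargate task."""
    return {
        "cluster": cluster,
        "taskDefinition": task_definition,
        "launchType": "FARGATE",
        "count": 1,
        # Fargate tasks use awsvpc networking, so subnets must be provided.
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": subnets,
                "securityGroups": security_groups,
                "assignPublicIp": "DISABLED",
            }
        },
    }


def run_once(request):
    """Start the task and return the ARNs of the tasks that were launched."""
    import boto3  # third-party; imported here so the builder works without it

    ecs = boto3.client("ecs")
    response = ecs.run_task(**request)
    return [task["taskArn"] for task in response["tasks"]]


# Hypothetical names and IDs, for illustration only.
request = build_run_task_request(
    cluster="reports-cluster",
    task_definition="daily-report",
    subnets=["subnet-00000000"],
    security_groups=["sg-00000000"],
)
```

Calling `run_once(request)` from a scheduler once a day would match the one-hour daily task described above; nothing keeps running between invocations.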

Running on AWS infrastructure also gives us easy and secure access to other services, like RDS or DynamoDB, to store and read data. In a matter of minutes, we can have a CRUD API that handles RESTful requests on demand.

One of the most important perks of this approach is that rolling update deployments come out of the box. Whenever you need to deploy a new version of your application, ECS on Fargate replaces the running tasks gradually so that your users do not experience downtime.
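How a rolling update avoids downtime can be sketched with the two percentages ECS uses in a service's deployment configuration, `minimumHealthyPercent` and `maximumPercent`. The helper below is an illustration, not part of any AWS SDK, and it assumes the rounding described in the ECS documentation (minimum rounded up, maximum rounded down).

```python
def rolling_update_bounds(desired_count, minimum_healthy_percent=100, maximum_percent=200):
    """Return (min_running, max_total) task counts allowed during a deployment.

    min_running: tasks that must stay running while old tasks are replaced.
    max_total:   upper limit on old plus new tasks existing at the same time.
    """
    # Integer arithmetic avoids floating-point rounding surprises.
    min_running = (desired_count * minimum_healthy_percent + 99) // 100  # round up
    max_total = (desired_count * maximum_percent) // 100                 # round down
    return min_running, max_total


# A service with 4 tasks and the values used as defaults in this sketch:
# all 4 old tasks keep running while up to 4 new ones start alongside them.
print(rolling_update_bounds(4))
```

Lowering `minimum_healthy_percent` lets ECS stop old tasks before new ones are ready, which uses less capacity during the deployment at the cost of reduced headroom.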

Overall, this technology could be the next step for on-demand and specialized tasks that save money for our companies or personal projects. The days of spinning up full instances are almost gone for the majority of modern web applications. Looking ahead, we will keep pushing the limits to achieve more scalable, flexible, and reliable solutions for these on-demand specialized tasks.